Install Slurm

What is a High-Performance Computer?

A high-performance computer (HPC system) is a tool used by computational scientists and engineers to tackle problems that require more computing resources or time than they can obtain on the personal computers available to them.

What is an HPC (High-Performance Computer)?

- Computers connected by some type of network (Ethernet, InfiniBand, etc.).

- Each of these computers is often referred to as a node.

- There are several different types of nodes, specialized for different purposes.

- Head (front-end and/or login) nodes are where you log in to interact with the HPC system.

- Compute nodes (CPU, GPU) are where the real computing is done. Access to these resources is controlled by a scheduler or batch system.

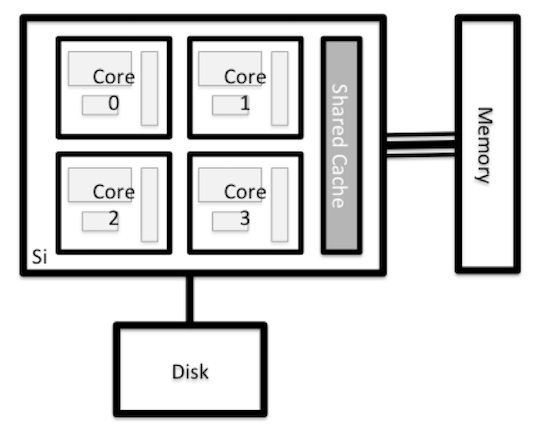

HPC architecture

Nodes

Parts of HPC system

- Servers:

  - Master server: master1, [master2 (backup)]

  - Login server

  - Accounting server: MySQL server

- Nodes: physical and/or virtual machines

- Storage: Ceph, Lustre, etc.

- Scheduler: Slurm, Torque/PBS, etc.

- Applications: Monitoring, software control, etc.

- Users database: OpenLDAP, AD, etc.

Storage and file systems

- Lustre: is a parallel distributed file system.

- Spectrum Scale: is a scalable high-performance data management solution.

- BeeGFS: was developed for I/O-intensive HPC applications.

- OrangeFS: is a parallel distributed file system that runs completely in user space.

- Ceph: offers file-, block- and object-based data storage on a single distributed cluster.

- GlusterFS: has a client-server model but does not need a dedicated metadata server.

Scheduler

In order to share these large systems among many users, it is common to allocate subsets of the compute nodes to tasks (or jobs), based on requests from users. These jobs may take a long time to complete, so they come and go in time. To manage the sharing of the compute nodes among all of the jobs, HPC systems use a batch system or scheduler.

The batch system usually has commands for submitting jobs, inquiring about their status, and modifying them. The HPC center defines the priorities of different jobs for execution on the compute nodes, while ensuring that the compute nodes are not overloaded.

A typical HPC workflow could look something like this:

- Transfer input datasets to the HPC system (via the login nodes)

- Create a job submission script to perform your computation (on the login nodes)

- Submit your job submission script to the scheduler (on the login nodes)

- Scheduler runs your computation (on the compute nodes)

- Analyze results from your computation (on the login or compute nodes, or transfer data for analysis elsewhere)
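As a minimal sketch of the "create a job submission script" and "submit" steps, assuming Slurm is the scheduler: the file name `hello_job.sh`, the job name, and the resource values below are illustrative assumptions, not site defaults.

```shell
# Write a minimal batch script (file name and resource values are illustrative).
cat > hello_job.sh <<'EOF'
#!/bin/bash
#SBATCH --job-name=hello
#SBATCH --nodes=1
#SBATCH --ntasks=1
#SBATCH --time=00:05:00
#SBATCH --output=hello_%j.out

# The actual computation: here it only prints the compute node's hostname.
srun hostname
EOF

# Submit only when Slurm is available on this machine (normally a login node).
if command -v sbatch >/dev/null 2>&1; then
    sbatch hello_job.sh
else
    echo "sbatch not found; run this on a login node"
fi
```

Once the job finishes, its output should appear in a file named after the `--output` pattern, where `%j` is replaced by the job ID.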

Slurm:

- Is an open-source, highly scalable cluster management and job scheduling system.

- Requires no kernel modifications for its operation

- Is relatively self-contained.

Slurm has three key functions

- It allocates exclusive and/or non-exclusive access to resources (nodes) to users for some duration of time so they can perform work.

- It provides a framework for starting, executing, and monitoring work on the set of allocated nodes.

- It arbitrates contention for resources by managing a queue of pending work.

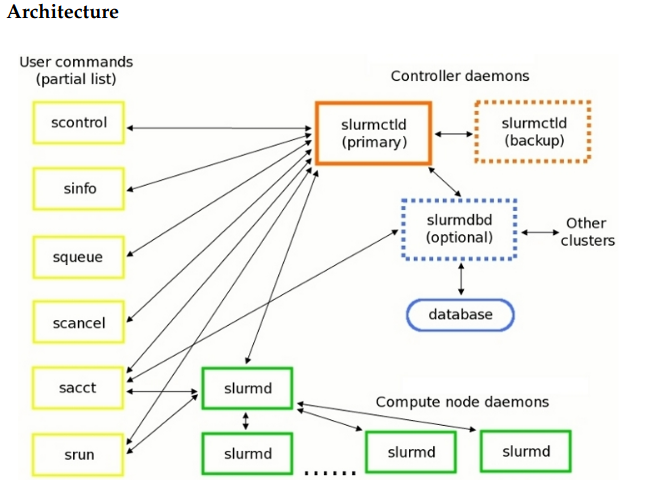

Slurm architecture

Commands:

- scontrol: is the administrative tool used to view and/or modify Slurm state.

- sinfo: reports the state of partitions and nodes managed by Slurm.

- squeue: reports the state of jobs or job steps.

- scancel: to cancel a pending or running job or job step.

- sacct: is used to report accounting information about jobs or job steps.

- srun: to submit a job for execution or initiate job steps in real time.
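The commands above might be used as follows. This is only a sketch: the helper `try` is a hypothetical wrapper (not part of Slurm) that skips a command when it is not installed, so the snippet can be pasted on any machine.

```shell
# Hypothetical helper: run a command if it exists, otherwise report that it was skipped.
try() {
    if command -v "$1" >/dev/null 2>&1; then
        "$@"
    else
        echo "skipped (not installed): $*"
    fi
}

me="${USER:-$(id -un)}"

try sinfo                    # state of partitions and nodes
try squeue -u "$me"          # your pending and running jobs
try sacct -u "$me"           # accounting records for your jobs
try scontrol show partition  # detailed partition configuration
try srun -N 1 hostname       # run one task on one node, interactively
```

To cancel a job, pass the job ID reported by `squeue` to `scancel`.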

Applications

- Lifecycle management tool: Foreman or PXE server [PXE-DNS-DHCP-TFTP]

- Monitoring services: Nagios, Icinga, etc.

- Resource monitoring (memory, CPU load, etc.): Ganglia

- SSH server: OpenSSH

- Central configuration management server: Puppet, Ansible, etc.

- Scientific software management: EasyBuild

- Environment module system: Lmod

- Central users database: OpenLDAP, Active Directory, etc.

Slurm Installation

- Operating system: CentOS 7 or 8

- Define the hostname of every server: master, node01 ... nodeN

- Install the database server (MariaDB)

- Create the global users (munge and slurm). Slurm and Munge require consistent UID and GID across every node in the cluster.

- Install Munge

- Install Slurm

Create the global user and group for Munge

On every node, before you install Slurm or Munge, you need to create the users and groups with the same UID and GID:

export MUNGEUSER=991
groupadd -g $MUNGEUSER munge
useradd -m -c "MUNGE Uid 'N' Gid Emporium" -d /var/lib/munge -u $MUNGEUSER -g munge -s /sbin/nologin munge

export SLURMUSER=992
groupadd -g $SLURMUSER slurm
useradd -m -c "SLURM workload manager" -d /var/lib/slurm -u $SLURMUSER -g slurm -s /bin/bash slurm
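Because the IDs must match on every node, it can help to verify them after creating the users. The following is a small sketch; `check_id` is a hypothetical helper name, and it assumes the UID and GID share the same number, as in the commands above.

```shell
# Hypothetical helper: check that a user exists and has the expected UID and GID.
check_id() {
    user=$1
    expected=$2
    uid=$(id -u "$user" 2>/dev/null) || { echo "$user: missing"; return 1; }
    gid=$(id -g "$user")
    if [ "$uid" = "$expected" ] && [ "$gid" = "$expected" ]; then
        echo "$user: ok (uid=$uid gid=$gid)"
    else
        echo "$user: mismatch (uid=$uid gid=$gid, want $expected)"
        return 1
    fi
}

# "|| true" keeps the script going so that both users are always reported.
check_id munge 991 || true
check_id slurm 992 || true
```

Running the same snippet on each node and comparing the output is one simple way to spot a node where the IDs differ.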

Install Slurm dependencies

On every node, we need to install a few dependencies:

yum install openssl openssl-devel pam-devel numactl numactl-devel hwloc hwloc-devel lua lua-devel readline-devel rrdtool-devel ncurses-devel man2html libibmad libibumad perl-ExtUtils-MakeMaker -y

Install Munge

Munge must be installed on every node.

First, we need to get the latest EPEL repository:

yum install epel-release
yum update

For CentOS 8, we also need to edit the file /etc/yum.repos.d/CentOS-PowerTools.repo and enable the repository.

Change:

enabled=0 to enabled=1
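The edit can also be scripted instead of done by hand. This is a sketch under two assumptions: the key in yum `.repo` files is spelled `enabled`, and `enable_repo` is a hypothetical helper name. It flips every `enabled=0` line in the given file and keeps a backup of the real repository file.

```shell
# Hypothetical helper: switch "enabled=0" to "enabled=1" in a yum .repo file.
enable_repo() {
    file=$1
    # Write to a temporary file first, then copy back to keep the original permissions.
    sed 's/^enabled=0/enabled=1/' "$file" > "$file.tmp" &&
        cat "$file.tmp" > "$file" &&
        rm -f "$file.tmp"
}

REPO=/etc/yum.repos.d/CentOS-PowerTools.repo
if [ -w "$REPO" ]; then
    cp "$REPO" "$REPO.bak"   # keep a backup before editing
    enable_repo "$REPO"
fi
```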

Then update the repository database:

yum update

After that, we can install Munge

yum install munge munge-libs munge-devel -y

On the master server, we need to create the Munge key and copy it to all the other servers. First, install and start rng-tools so that enough entropy is available:

yum install rng-tools -y
rngd -r /dev/urandom

Creating the Munge key

/usr/sbin/create-munge-key -r
dd if=/dev/urandom bs=1 count=1024 > /etc/munge/munge.key
chown munge: /etc/munge/munge.key
chmod 400 /etc/munge/munge.key
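The `dd` command above writes exactly 1024 bytes, so a quick size check can confirm that the key was created as expected. This is only a sanity-check sketch; `key_size_ok` is a hypothetical helper name.

```shell
# Hypothetical helper: succeed if the file is readable and has the expected size in bytes.
key_size_ok() {
    file=$1
    expected=${2:-1024}
    [ -r "$file" ] || { echo "cannot read $file"; return 1; }
    size=$(wc -c < "$file" | tr -d ' ')
    [ "$size" -eq "$expected" ]
}

KEY=/etc/munge/munge.key
if [ -r "$KEY" ]; then
    if key_size_ok "$KEY"; then
        echo "key size ok"
    else
        echo "unexpected key size"
    fi
fi
```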

Copying the Munge key to the other servers

scp /etc/munge/munge.key root@node01:/etc/munge
scp /etc/munge/munge.key root@node02:/etc/munge
. . .
scp /etc/munge/munge.key root@nodeN:/etc/munge
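With many nodes, the copy can be written as a loop. The sketch below assumes a hypothetical node list in `NODES` and a `DRY_RUN` switch (both introduced here for illustration); by default it only prints the `scp` commands it would run.

```shell
KEY=/etc/munge/munge.key
NODES="node01 node02"    # hypothetical list; extend it up to nodeN
DRY_RUN=${DRY_RUN:-1}    # 1 = only print the commands, 0 = really copy

copy_key() {
    for node in $NODES; do
        if [ "$DRY_RUN" = 1 ]; then
            echo "scp $KEY root@$node:/etc/munge"
        else
            scp "$KEY" "root@$node:/etc/munge"
        fi
    done
}

copy_key
```

Setting `DRY_RUN=0` before running the snippet performs the real copy; the key on each node should then have the same owner and permissions as on the master.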